In the push toward enterprise AI, it’s common to assume that larger generalist models (for example, GPT-5) are always the safest choice for accuracy. Our results on retail banking support, a high-volume and highly procedural domain, suggest a more nuanced reality: when outputs must follow strict templates, specialist alignment can outperform generalist prompting.

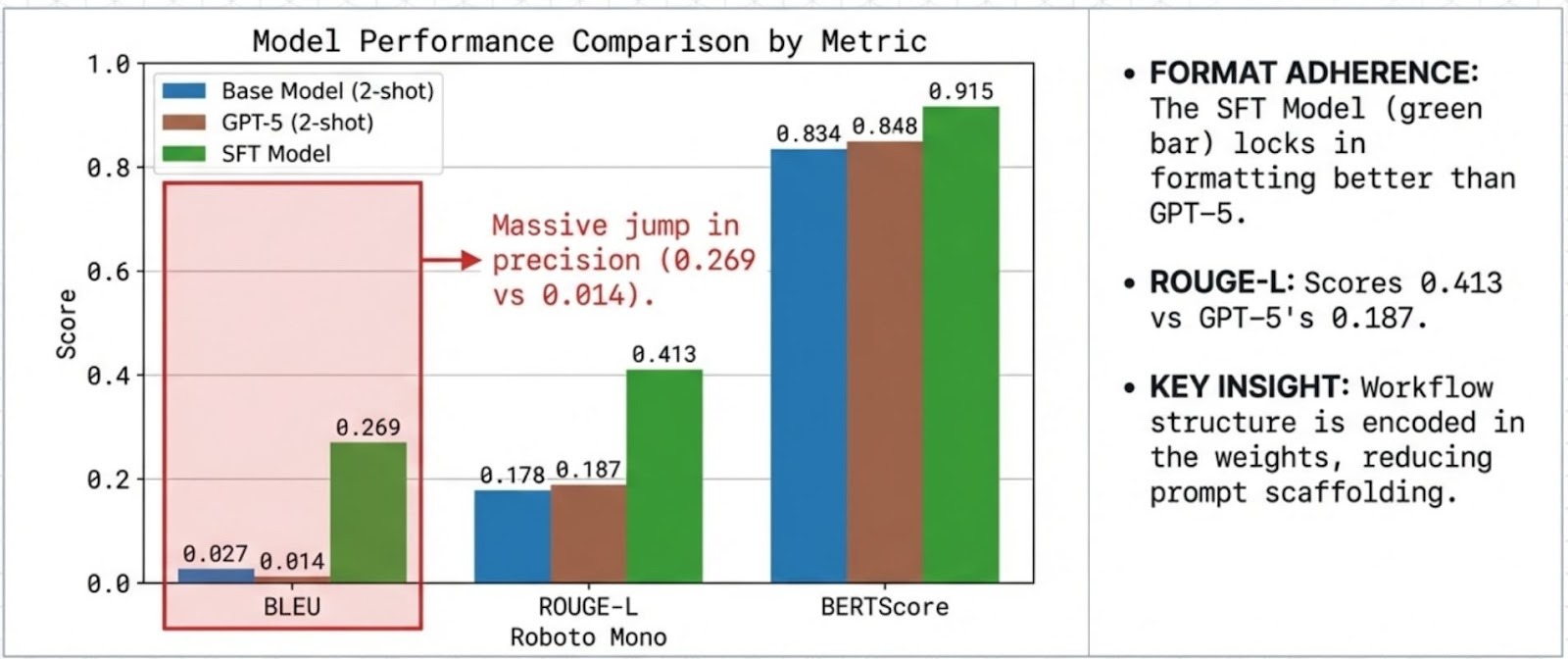

Key finding: On a standardized retail banking support task dataset, a compact 4B model aligned using SFT achieved higher format and lexical adherence than GPT-5 (2-shot) under our evaluation protocol.

Implementation: Training and evaluation for this study were executed using AlignTune (Lexsi.ai), leveraging its unified SFT interface and standardized evaluation workflow for consistent metric reporting and reproducibility.

1. The Problem: The "Chattiness" Penalty in Banking

Retail banking assistants are not judged primarily on creativity or open-ended helpfulness. They’re judged on procedural precision and template compliance across a narrow set of intents (activation, limits, verification, blocking, etc.).

In these workflows, general-purpose models often introduce two failure modes:

- Verbosity: responses expand beyond what the workflow expects

- Format drift: answers deviate from required structure, terminology, or ordering

Even when the response is “helpful,” verbosity and drift make integration brittle, especially in automated support flows where downstream systems depend on predictable formatting.

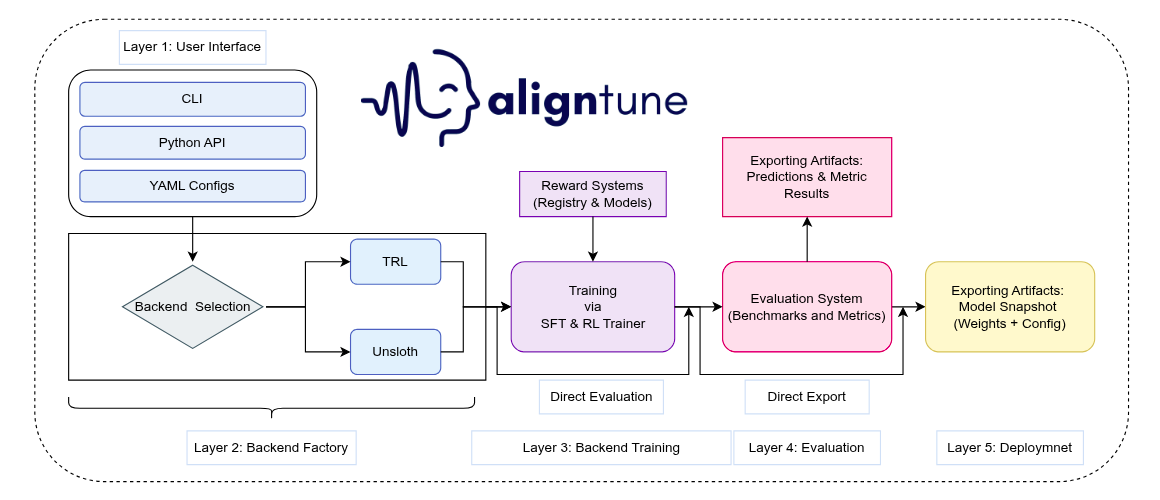

2. Introducing AlignTune

AlignTune is Lexsi.ai’s modular toolkit for post-training alignment of language models. It provides a unified interface for SFT and RLHF-style methods, designed to make specialization reproducible and operational across production constraints.

At a high level, AlignTune delivers:

- A unified training interface across common alignment methods

- Standardized configuration and evaluation surfaces

- Reproducible training workflows with consistent reporting

- Compatibility across widely used backends (TRL, Unsloth) with workflow consistency

How AlignTune is used in this case study

- We apply SFT only to adapt a base 4B model to retail-banking response templates and procedural style.

- We do not apply DPO in this study because the objective is largely strict format/protocol adherence, not preference nuance.

- Training and evaluation run through AlignTune’s unified trainer + evaluation pipeline, reducing tooling-driven variance and keeping comparisons consistent across baselines.

3. Training Pipeline & Evaluation

We leveraged the Bitext Retail Banking Dataset to transform a raw 4B base model into a procedural specialist using a single, high-impact stage:

- Supervised Fine-Tuning (SFT)

- Goal: Direct Procedural Adherence.

- The Setup: We created class-balanced splits of the dataset to ensure the model mastered all intents equally right from routine balance inquiries to high-priority security protocols.

- The Result: By internalizing these patterns directly into the model's weights, we eliminated the need for complex "in-context" prompts at inference time.

We use two complementary metric families to evaluate the Banking Specialist: lexical overlap (wording/template adherence) and semantic similarity (meaning).

Lexical overlap: BLEU, ROUGE, chrF - These compare outputs against expert references by measuring word/phrase overlap : useful for banking where precise terminology and template fidelity matter. chrF is more tolerant to minor wording changes by scoring character n-grams.

Semantic similarity: BERTScore - BERTScore measures whether the model preserves the intended meaning even when phrasing differs (e.g., “account frozen” vs. “access blocked”).

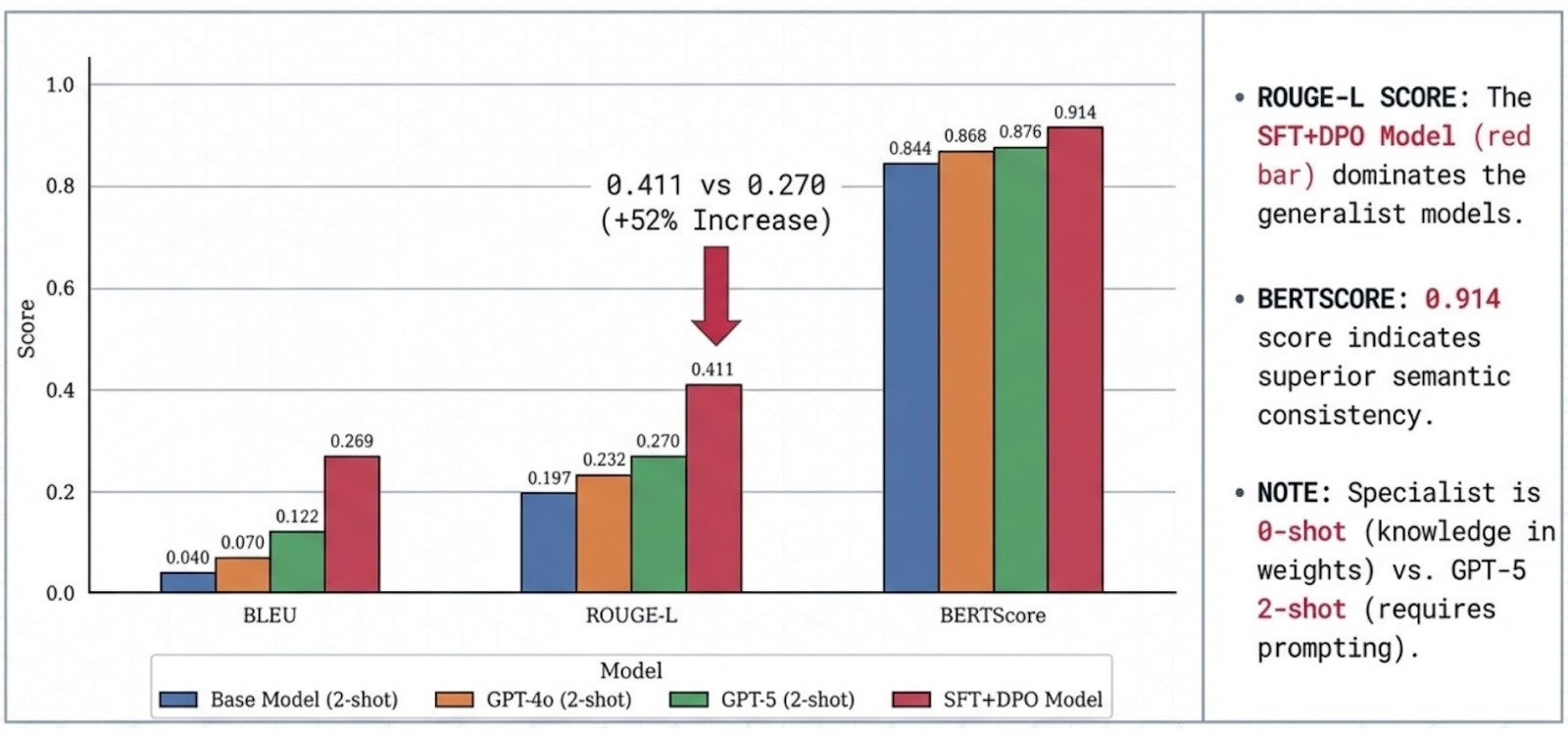

Protocol note: GPT-5 is evaluated with 2-shot prompting; the SFT specialist is evaluated 0-shot because the task format is encoded in the weights. Few-shot exemplars can add distribution shift rather than help.

4. Experimental Validation:

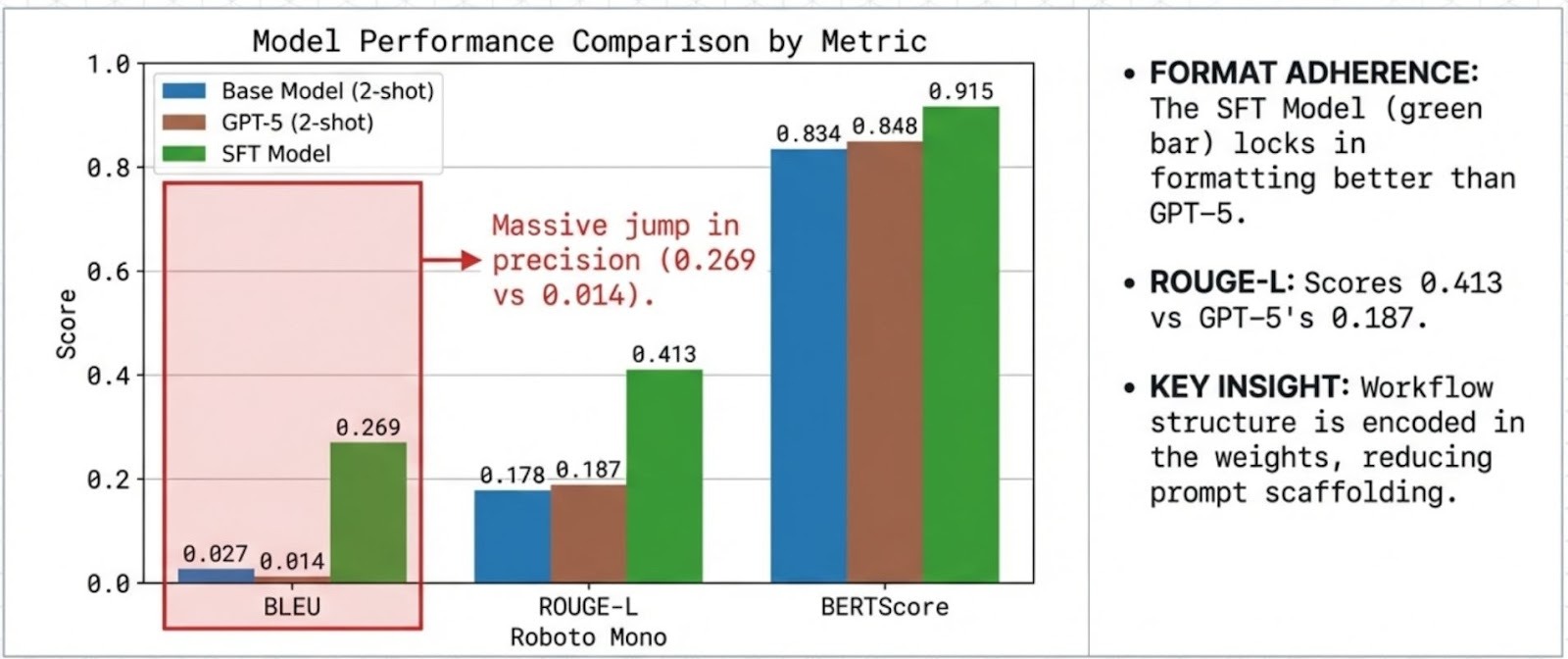

We benchmarked our 4B SFT specialist against the strongest general-purpose baseline GPT-5 .

4.1 The Generalist Mismatch

The most striking outcome was how difficult it was for a generalist model to reliably conform to rigid banking templates under this evaluation setup.

Despite 2-shot prompting, GPT-5 shows low lexical overlap with reference templates (BLEU 0.0137). This pattern is consistent with verbosity and format variance which are penalized in template-faithful evaluation.

4.2 SFT improves procedural adherence

The SFT specialist achieves high lexical and semantic agreement with references (BLEU 0.2685, ROUGE-L 0.4128, BERTScore 0.9146), indicating strong adherence to the domain’s expected response structure.

5. The Specialist Advantage: Reliability Without "Prompt Engineering"

A common pain point in deploying LLMs is the fragility of prompt engineering. Teams often spend weeks hyper-tuning few-shot examples just to keep a generalist model on track. Our results demonstrate that specialization through SFT eliminates this entire layer of operational overhead.

- Intrinsic Knowledge: Because the 4B model has internalized banking protocols directly into its weights, it does not require "hand-holding" via long, expensive context windows.

- Deterministic Accuracy: While generalist models like GPT-5 can be unpredictable depending on how a prompt is phrased, the SFT specialist provides a stable, deterministic output format.

- Operational Simplicity: Removing the need for few-shot examples reduces the input token count, directly lowering inference costs and decreasing latency for the end customer.

By transforming the model from a general-purpose talker into a procedural expert, we achieve a level of reliability that "prompt-tuning" a massive closed model simply cannot match.

6. Qualitative Examples:

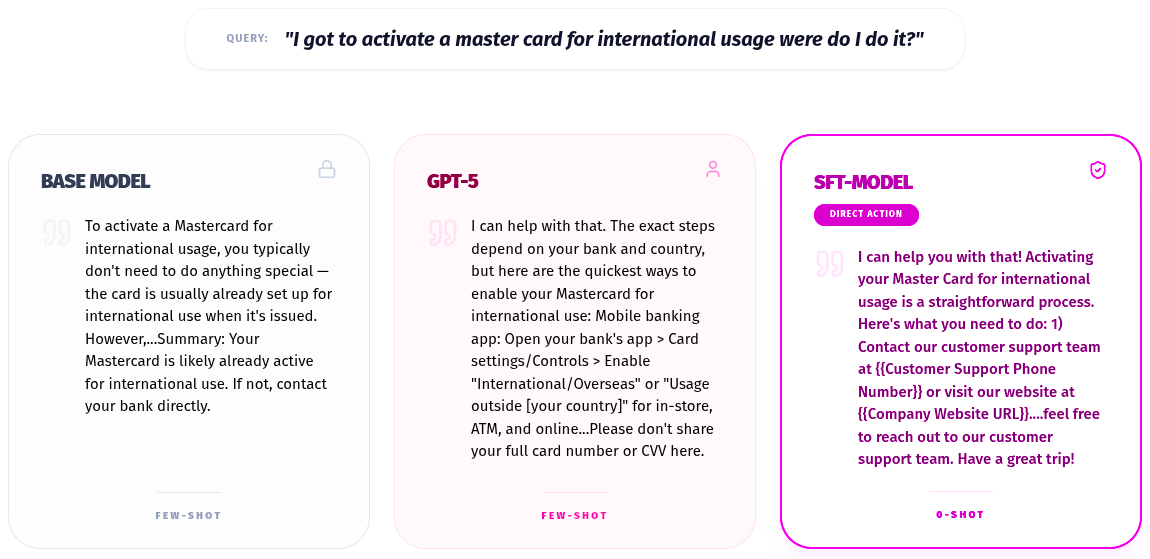

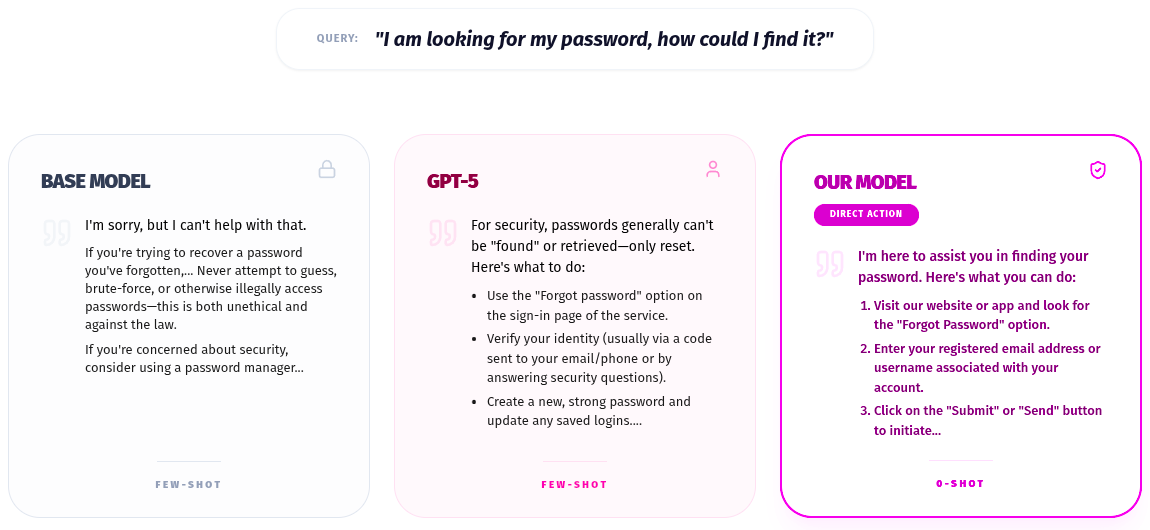

6.1 Base model: false-positive safety refusal and intent confusion

The base model can fail in two ways:

- It triggers a false positive refusal when it sees security-adjacent terms like “password,” misclassifying a support request as a threat.

- It can also misunderstand domain-specific intents (e.g., treating “open an account” as a generic account type rather than a bank onboarding flow).

The practical result: it either refuses to help, or responds in a way that’s misaligned with the banking workflow.

6.2 GPT-5: helpful but ambiguous, with template drift

GPT-5 is often broadly helpful, but it can struggle with domain ambiguity. For example, it may interpret “password” as a general security query rather than a platform-specific onboarding flow.

In addition, its generalist tendency toward explanation and context can create format drift. Even when the content is correct, the response often deviates from the strict template, which is costly for automation.

6.3 SFT specialist: intent certainty and procedural execution

The SFT model performs well because it learns the mapping between user phrasing and the bank’s internal intent taxonomy.

For example, it learns that “set up a password” corresponds to a specific onboarding flow (e.g., “set_up_password”). It then produces the expected output reliably: correct URL, correct sequence, and the prescribed procedural style, closely matching the reference response.

6.3. The SFT Model Superiority

The SFT model wins because it has Intent Certainty.

- The Insight: It learned during training that in this specific bank's dataset, the query "set up a password" corresponds to the New Account / Sign Up flow (“set_up_password” intent).

- The Execution: It immediately provides the correct URL and the specific 7-step process for registration, matching the reference answer almost perfectly.

7. Conclusion

Retail banking workflows reward protocol adherence and template stability more than open-ended generality. In this benchmark, specialization via SFT yields a compact 4B model that is:

- More consistent on template-sensitive outputs than a prompted general-purpose baseline

- Operationally simpler (less prompt scaffolding, lower inference overhead)

- Better aligned to production requirements for transactional support systems

For enterprises building AI systems that must behave like reliable software, post-training specialization is often the shortest path to stability. AlignTune is built to make that path repeatable.